As 2023 draws to a close, it’s time to reflect on TrueFoundry’s journey over the past year. This reflection isn’t just a celebration of our achievements but also an acknowledgment of the challenges we’ve navigated, an appreciation of the opportunities we have been presented with, and the learnings we’ve embraced. Instead of focusing on operational details, we will walk you through a chronological journey of learnings and realizations, centered on our thesis on MLOps and how things played out in reality.

I am personally a space enthusiast, so I find the traditional analogy of startups as rocket ships fitting. If I had to describe our timeline as if we were building a rocket ship, then 2022 was the year of putting together the engine and taking a test ride, whereas 2023 is when we set the course for the stars and secured the thrusters for our cosmic odyssey! I am very excited to walk you through our journey of 2023, but first let me set some context about TrueFoundry and the beginning of the year.

TrueFoundry and year 2022

TrueFoundry is building a cloud-agnostic PaaS on Kubernetes that standardizes the training and deployment of machine learning models using production-ready, developer-friendly APIs.

In 2022, we spent time building our team, developing the plumbing layer of the platform across different cloud providers, and working closely with our first few design partners. We developed the core service deployment layer, built the UI, CLI and Python SDK driven experiences, and experienced the joy of our first customer dollar! We also realized that selling in the MLOps space is difficult because most companies had built “something that worked” and the resistance to change was very high.

On the other hand, we had really validated the problems we were solving:

- Machine learning models weren’t making it to production

- ML deployments were delayed because of model handoffs

- Data scientists faced major infra-management issues

- K8s adoption in the ML world was minimal

- Having machine learning models follow a different deployment pipeline from full-stack software was badly broken

- Companies were spending 2–10x more on machine learning than was really necessary

By this time, we had identified that we were solving a major problem with a huge economic impact, but the challenge was that this wasn’t an urgent problem in the customer’s mind. Our learning from this episode:

Solving a major pain point is critical for sustainability, but you can’t fabricate urgency. The customer and the market will decide that. It’s the business world’s equivalent of the laws of physics. Don’t fight it, keep looking!

Kicking off 2023

With that, we started 2023 with multiple GTM experiments to run, based on what we had learned from working with design partners. Here are a few concrete examples of the experiments we ran:

- Cross-cloud hypothesis: 84% of enterprises are cross-cloud, and running workloads across multiple cloud vendors is really difficult. While this was a huge time and cost sink organizationally, we couldn’t repeatably find a single point of contact for whom this was a top-of-mind problem.

- K8s driven ML workloads: The full-stack software world had already started reaping the benefits of the scale of K8s and the ecosystem around it, and it was clear that these benefits would only be accentuated for ML. While we found some teams who had K8s migration as a priority, it always took a back seat to user-facing work for our customers.

- Cost optimization: We noticed that most of our design partners saved 40–50% of their cloud infra costs working with our platform, so we targeted organizations that had cost reduction as a goal for the year. This resonated, of course, but we noticed that the team in charge of reducing costs was mostly DevOps. Their charter covered one-time cost reduction, and they had little to no control over developer workflows, which meant the problem would resurface.

So we had a number of partly successful or failed experiments, through which we further noticed how prevalent the problems we were trying to solve were. But we still could not find our way to a narrow customer persona with the exact same problem, one that is urgent and can be repeatably identified externally.

This continued until everybody in the world wanted to work with LLMs and we were presented with a well-timed opportunity.

LLMs aggregated the demand for us. Everybody wanted to work with LLMs and everyone now “urgently” faced the same problems we were trying to solve.

Uniformity of LLMOps, MLOps & DevOps

Here are a few of those problems in the context of LLMs:

- Demo to production: Literally everyone could write a few prompts and make a swanky GPT-4 based demo. Every data scientist would agree that building a quick RAG using LangChain is a couple of hours of work. The challenge is making these demos production-ready, which requires putting many pieces together in a reliable way. You need to build workflows that make it easier for data scientists to think of these apps in a production setting from the get-go.

- A100s, where are you?: We don’t know of a single developer working with LLMs on their own infra who hasn’t complained about the unavailability of GPUs, especially A100s. How can you increase the probability of getting these GPUs? Expose developers to multiple clouds or data centers. But a multi-cloud architecture is a mess to deal with if you don’t have the right tools.

- Hosting open source models: Hosting these large language models requires close handholding of infrastructure, which increases the dependence of data science teams on the infra team. If we could build the right platform, one where the DS teams are reasonably “infra independent”, this problem would be curtailed for relatively simple use cases like out-of-the-box model hosting.

- Long-running fine-tuning jobs: Most of our customers are fine-tuning LLMs, and some are pretraining as well. These are long-running, expensive jobs where you can’t afford to waste many GPU cycles on human error. Best practices of logging, monitoring and experiment tracking are critical here. For example, if you don’t save model checkpoints by default and your training job gets killed 2 days into the execution, that’s a massive waste of time and resources.

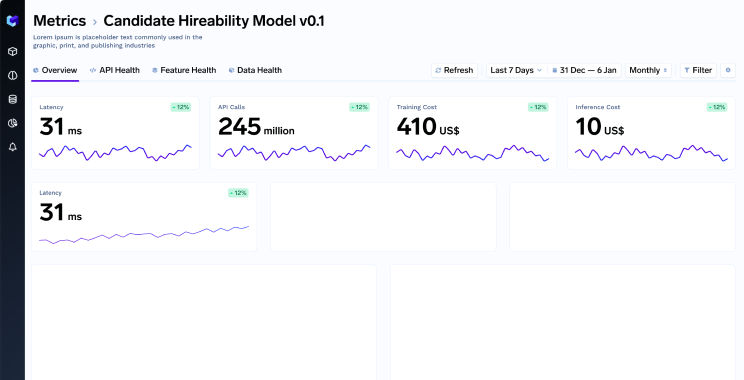

- Cost monitoring: LLMs are neither cheap to train nor cheap to run. Many companies currently have negative unit economics when serving their users with LLMs, on the assumption that costs will go down someday. Dependence on cloud platforms like SageMaker, which charge a premium over EC2 instances and seldom leverage spot instances, further exacerbates the problem. Besides, the developers who own the services have little to no visibility into infra costs. While this problem seems pronounced in the case of LLMs, all the logic mentioned above is fundamental to all software.

- Vector DBs & secrets management: To build LLM-based applications, developers found themselves grappling with multiple applications such as different vector DBs, Label Studio and a variety of API services. Each of them requires time to set up, plus infrastructure that allows API keys to be shared across the organisation with the right level of monitoring. Data scientists don’t find themselves equipped with the right tooling to deal with this, and the only solution is to “slow down, until safe solutions are implemented”.

These are some examples, but there are many other similar use cases that companies found hard to solve for, like setting up async inferencing, GPU-backed notebooks, shared storage drives across notebooks, cold start times of large Docker containers, etc.
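To make the checkpointing point from the fine-tuning bullet concrete, here is a minimal sketch of a “checkpoint by default, resume on restart” loop. It is illustrative only and is not TrueFoundry’s implementation: the function names, the JSON file layout and the toy “loss” update are all assumptions made for this example, and a real job would save model and optimizer state through its training framework.

```python
import json
import os
import tempfile
from pathlib import Path
from typing import Optional


def save_checkpoint(ckpt_dir: Path, step: int, state: dict) -> Path:
    """Write a checkpoint atomically so a crash mid-write cannot corrupt it."""
    ckpt_dir.mkdir(parents=True, exist_ok=True)
    final_path = ckpt_dir / f"step_{step:08d}.json"
    # Write to a temp file in the same directory, then rename it into place.
    fd, tmp_name = tempfile.mkstemp(dir=ckpt_dir, suffix=".tmp")
    with os.fdopen(fd, "w") as f:
        json.dump({"step": step, "state": state}, f)
    os.replace(tmp_name, final_path)
    return final_path


def load_latest_checkpoint(ckpt_dir: Path) -> Optional[dict]:
    """Return the most recent checkpoint, or None if none exists yet."""
    if not ckpt_dir.exists():
        return None
    # Zero-padded step numbers make lexicographic order match step order.
    candidates = sorted(ckpt_dir.glob("step_*.json"))
    if not candidates:
        return None
    with open(candidates[-1]) as f:
        return json.load(f)


def train(
    ckpt_dir: Path,
    total_steps: int,
    save_every: int = 100,
    fail_at: Optional[int] = None,  # hypothetical knob to simulate a killed job
) -> dict:
    """Toy training loop that resumes from the latest checkpoint if present."""
    ckpt = load_latest_checkpoint(ckpt_dir)
    step = ckpt["step"] if ckpt else 0
    state = ckpt["state"] if ckpt else {"loss": 1.0}
    while step < total_steps:
        step += 1
        state["loss"] *= 0.99  # stand-in for a real optimizer step
        if fail_at is not None and step == fail_at:
            raise RuntimeError(f"simulated preemption at step {step}")
        if step % save_every == 0 or step == total_steps:
            save_checkpoint(ckpt_dir, step, state)
    return {"step": step, "state": state}
```

With this shape, a job killed at step 230 with `save_every=100` loses only the 30 steps since the last checkpoint: calling `train` again on the same directory picks up from step 200 rather than from scratch.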

It turns out that customers who come to us for LLMs and experience some of these benefits, such as reducing the dependency of data scientists on infra, saving costs, or scaling applications across cloud providers while avoiding lock-in, realize that the same applies to other machine learning models that are not LLMs and, realistically, to the rest of the software stack. We also see this cross-pollination in the other direction: customers who started using us for deploying software or classic ML models are now seeing benefits with LLMs.

Conclusion

This strengthens our belief that the time we spent building our core infrastructure is paying off for us and our customers. We built it with an opinionated perspective: that ML is software and should be deployed similarly, that K8s will win in the long term, and that companies will want to avoid vendor lock-in, be it with cloud or other software vendors.

So, to conclude with the rocket ship analogy:

If 2023 was the year when we charted the territory and got the thrusters ready, we are looking forward to a 2024 where we will ignite the boosters to propel this rocket ship!

Wishing you all a very Happy New Year on behalf of the entire TrueFoundry team! Welcome 2024.