We speak with many enterprises and business leaders who are trying to figure out their strategy for using LLMs in this era of AI: should we go with OpenAI or open source LLMs? There are many good blogs that lay out the pros and cons of the different approaches in a neutral tone. We have an opinionated stance here:

- If you think LLMs are going to be crucial to your business, you need to invest in using Open Source LLMs on your own infra, starting yesterday!

- If you think LLMs are not going to be crucial to your business, think harder. If you still get the same answer, think one more time. After that, maybe you are right, and you can just use OpenAI or another commercial LLM for the quick use cases you want to solve.

Obviously, if your business, technology DNA, and scale demand pre-training LLMs from scratch, please invest in that. But most companies will not fall into this bucket, and that’s why we have one clear recommendation:

Your last chance to stay in the AI Game is to adopt Open Source LLMs now and run them on your infrastructure!

The Importance of Open Source LLMs

We believe companies that invest in open source LLMs and leverage them internally are poised to benefit from improved data security, greater control over their technology, and faster iteration times. Those who ignore this trend risk falling behind and losing out to competitors who have already begun building their AI muscle using smaller, more efficient models. Let’s dive into the details:

Data Security & Moat

Most companies are stuck in internal discussions about setting up data security policies: what data is okay to send to commercial LLM providers? Where am I crossing a compliance boundary, and where am I losing my competitive moat? Yes, you can prevent OpenAI from directly using your chat data for fine-tuning, but someday some developer will make a mistake and send data they shouldn’t.

While these discussions drag on, agile competitors are already making progress with Open Source LLMs and winning their customers’ trust. They are launching features fast, learning fast, and at the same time building a long-term competitive moat using open source LLMs.

Iterate to improve

Many, including Google, anticipate that smaller, fine-tuned open source models might win over very large, generic, static models. This is intuitive because very large language models are almost impossible to iterate on. You get one shot at it, or your cost and iteration time multiply.

The teams that have started building this muscle are at a massive positional advantage because it lets them iterate and improve rapidly using small models at a fraction of the cost of the large models! Once this gap is established, it’s very hard to close because so much learning is gained in the process.

Controlling your destiny

Invoking OpenAI APIs is easy but there are concerns around latency & uptime. This will likely improve with time but what if they decide to charge a whole lot more for the latency guarantees? What if hosting fine-tuned models doesn’t fit their long term business model and they decide to discontinue it altogether?

Community contributions

Very large language models evolve at the speed at which the dozens or hundreds of people working at OpenAI / Google can contribute while prioritizing the needs of millions. On the other hand, the entire community of open source developers is rapidly building many versions of smaller models: some with low-rank optimizations, some that run on mobile, some that can be personalized, some that are larger and instruction-tuned. There is practically no limit to this innovation and personalization. You can choose which model works best for each of your use cases.

Additionally, there is an inherent advantage in being able to run fast and cheap if you are using multiple smaller models, each specific to a given task.

Why isn’t everyone using Open Source LLMs?

Such a strong recommendation raises the question: if it’s that important, why isn’t everyone doing it? First of all, an increasing number of people are already investing more and more of their time and resources in understanding the landscape and building on top of open source LLMs. So the premise that not everyone is doing it is becoming less true by the day :) But there are some inherent challenges in using Open Source LLMs and running them on your infra, compared to using their commercial counterparts:

Lack of technical expertise

Most teams today don’t have the multi-faceted expertise to fine-tune and host large language models internally. Smart people can always figure it out eventually, but figuring out this complicated modeling and infra at the same time, while new tools and models are being released every day, is just hard and time-consuming.

Usage terms

Many technical and business leaders are confused about which LLMs, datasets, or libraries are okay to use commercially and which are not. For example, Vicuna, which appears to be under the Apache 2.0 license, is trained on Llama, which is not licensed for commercial use. That makes Vicuna unusable commercially, and it is far from obvious that using it could be a violation. See details that we wrote about in a previous blog here.

Memory and time constraints

Most reasonably sized large language models (13B+ parameters) won’t fit, or cannot be fine-tuned, on commonly available GPUs because of memory constraints. If you decide to optimize for memory, which is non-trivial, your training time takes a hit. There are a lot of techniques around gradient management, low-rank approximation, mixed-precision serving, accelerated training and deployment, and model-specific optimizations using different libraries, and all of these are hard to learn and implement quickly. This leaves teams throwing hardware at the problem and babysitting the GPUs for every successful run.
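To make the memory constraint concrete, here is a rough back-of-the-envelope sketch. It assumes a common rule of thumb that is not from this post: about 2 bytes per parameter to hold fp16 weights, and about 16 bytes per parameter for full fine-tuning with Adam in mixed precision (fp16 weights and gradients, plus fp32 master weights and two fp32 optimizer moments). Activations and framework overhead are ignored, so real numbers will be higher. The function names and GPU sizes are illustrative.

```python
# Rough GPU memory estimate for serving vs. fully fine-tuning an LLM.
# Assumed rule of thumb (not from the post); activations and overhead are ignored.

BYTES_FP16_WEIGHTS = 2    # fp16 weights only (inference)
BYTES_FULL_FINETUNE = 16  # 2 weights + 2 grads + 4 master weights + 4 + 4 Adam moments


def memory_gb(num_params: int, bytes_per_param: int) -> float:
    """Return the estimated memory in GB (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


def fits_on_gpu(required_gb: float, gpu_memory_gb: float) -> bool:
    """True if the estimate fits on a single GPU of the given size."""
    return required_gb <= gpu_memory_gb


params = 13_000_000_000  # a 13B-parameter model
serve_gb = memory_gb(params, BYTES_FP16_WEIGHTS)
train_gb = memory_gb(params, BYTES_FULL_FINETUNE)

for gpu_gb in (24, 40, 80):  # illustrative single-GPU memory sizes
    print(
        f"{gpu_gb} GB GPU: serve fits={fits_on_gpu(serve_gb, gpu_gb)}, "
        f"full fine-tune fits={fits_on_gpu(train_gb, gpu_gb)}"
    )
```

Under these assumptions, a 13B model needs roughly 26 GB just for its fp16 weights and roughly 208 GB for full fine-tuning, which is why memory-saving techniques such as low-rank approximation come up so quickly.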

GPU availability & management

Cloud providers require GPU quotas, which are frequently constrained and expensive, and GPUs often come in batches of 8 cards, which can be suboptimal from a cost perspective. Most teams aren’t familiar with how to distribute a model across multiple GPUs when it won’t fit on one, or how to run it optimally once it is distributed.
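The cost effect of fixed-size GPU batches can be sketched with simple arithmetic. The example below assumes a hypothetical job that needs 208 GB of GPU memory, 80 GB cards, and perfect sharding with no per-GPU overhead, so the GPU count is a lower bound. All names and figures are illustrative assumptions.

```python
import math


def gpus_needed(required_gb: float, gpu_memory_gb: float) -> int:
    """Minimum number of GPUs if memory could be sharded perfectly."""
    return math.ceil(required_gb / gpu_memory_gb)


def gpus_billed(needed: int, batch_size: int = 8) -> int:
    """GPUs you pay for when cards are only offered in fixed-size batches."""
    return math.ceil(needed / batch_size) * batch_size


def idle_fraction(needed: int, billed: int) -> float:
    """Share of the billed GPUs that the job does not need."""
    return (billed - needed) / billed


needed = gpus_needed(required_gb=208, gpu_memory_gb=80)  # hypothetical job
billed = gpus_billed(needed)
print(f"needed={needed}, billed={billed}, idle={idle_fraction(needed, billed):.1%}")
```

In this hypothetical case the job needs 3 GPUs but is billed for 8, so more than half of the paid capacity sits idle unless other workloads can share the node.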

Besides, there is always pressure to move fast, because enterprises worry that if they don’t make their own LLM announcement soon enough, their competition might get the first-mover advantage and wow their customers. This concern isn’t baseless: we have seen it happen with a number of the customers we speak with.

What is TrueFoundry doing about it?

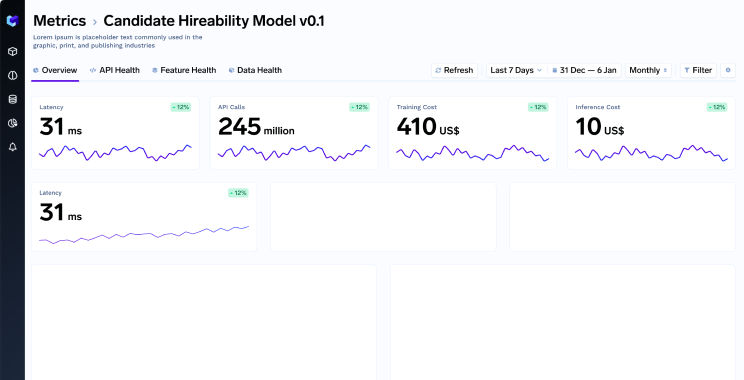

At TrueFoundry, some of these problems are core to what we are solving. Our platform is designed to run on your infra, ensuring complete data security, and it builds meaningful abstractions that hide irrelevant infrastructure complexity while still keeping control in the hands of the developer. As a fast-evolving space, AI and LLMs require constant learning and adaptation. The TrueFoundry team is dedicated to helping you navigate this landscape through our product, guidance, suggestions, and tailored solutions.

Investing in open source LLMs and using them internally is a strategic move that will help your company stay ahead of the curve. TrueFoundry can help accelerate your AI initiatives and maintain a competitive advantage in an ever-changing landscape. Don’t fall behind: embrace open source LLMs and secure your place at the forefront of AI innovation.