We are back with another episode of True ML Talks. In this episode, we again dive deep into LLMs, LLMOps, and Generative AI, and we are speaking with Michael Boufford.

Michael is the CTO of Greenhouse. He joined as the first employee about 11 years ago, wrote the first lines of code, and has built the company up to where it is today.

Our conversation with Mike covers the following topics:

- Organizational Structure of ML Teams at Greenhouse

- How LLMs and Generative AI Models are Used in Greenhouse

- Navigating Large Language Models

- Understanding Prompt Engineering

- LLMOps and Critical Tooling for LLMs

Watch the full episode below:

Organizational Structure of Data Science and Machine Learning Teams at Greenhouse

Greenhouse's data science and machine learning teams have evolved with the company's growth, transitioning from generalists to specialized roles. Key aspects of their organizational structure include:

- Data Engineering and Platform: A dedicated team manages data engineering, data warehousing, and machine learning feature development. They support marketing efforts and handle deployment and operations for code and models.

- Product Data Science: This team focuses on supporting product decision-making through innovative projects, data analysis, and insights driving product development.

- ML Engineering: Greenhouse has an ML engineering team specialized in building scalable and reliable production-ready models for various product use cases.

Additionally, a Business Analyst team addresses business-related questions and provides insights.

Infrastructure management is the responsibility of a separate Infrastructure team, which oversees components like Kubernetes and AWS. Another dedicated team manages the data stores.

How LLMs and Generative AI Models are Used in Greenhouse

Here are several use cases where these models have been employed within Greenhouse's operations.

- Job Similarity and Data Processing: Greenhouse has been using LLMs, including BERT and GPT-2, to analyze and process various aspects of job-related data. These models assist in determining similarities between different job listings, as well as parsing and processing raw resume data. The focus lies in efficient data processing and labeling efforts related to job descriptions.

- RAG Architecture for Faster Answers: Greenhouse has recently explored the use of GPT-4 for innovative use cases. One of these involves implementing the RAG (Retrieval-Augmented Generation) architecture to provide rapid responses to user queries. By leveraging generative models, Greenhouse aims to enable users to obtain answers to complex questions that previously required manual report generation. The generative model acts as a translator, converting English queries into a query language that interacts with the data store, and then translating the response back for consumption.

- Reporting and Business Intelligence (BI): With access to vast amounts of text data in the form of job descriptions and resumes, Greenhouse is well-positioned to leverage LLMs and generative models for reporting and BI purposes. Greenhouse already offers pre-built reports, a custom report builder, and a data lake product. The company envisions utilizing LLMs to answer a wide range of reporting questions related to recruiting, such as sourcing performance, interview processes, hiring status, budget analysis, and more.
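
The translator flow described in the RAG bullet above (English question in, data-store query out, result translated back) can be sketched roughly as follows. This is only an illustration under stated assumptions, not Greenhouse's implementation: the `applications` table, the sample rows, and the `translate_to_sql` and `summarize` stubs (which stand in for calls to a generative model) are all hypothetical.

```python
import sqlite3

def build_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with a hypothetical schema and made-up rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE applications (candidate TEXT, source TEXT, status TEXT)"
    )
    conn.executemany(
        "INSERT INTO applications VALUES (?, ?, ?)",
        [
            ("A", "referral", "hired"),
            ("B", "job_board", "rejected"),
            ("C", "referral", "interviewing"),
            ("D", "job_board", "hired"),
            ("E", "referral", "hired"),
        ],
    )
    return conn

def translate_to_sql(question: str) -> str:
    """Stand-in for the generative model (assumption): a real system would
    send the question and the schema to an LLM and receive a query back."""
    canned = {
        "how many hires came from each source?": (
            "SELECT source, COUNT(*) FROM applications "
            "WHERE status = 'hired' GROUP BY source ORDER BY source"
        ),
    }
    return canned[question.strip().lower()]

def summarize(rows) -> str:
    """Stand-in for the second model call that turns rows back into English."""
    return "; ".join(f"{source}: {count}" for source, count in rows)

def answer(conn: sqlite3.Connection, question: str) -> str:
    sql = translate_to_sql(question)
    # Guardrail: generated queries are untrusted, so only allow reads.
    if not sql.lstrip().upper().startswith("SELECT"):
        raise ValueError("refusing to run a non-SELECT statement")
    rows = conn.execute(sql).fetchall()
    return summarize(rows)

conn = build_demo_db()
result = answer(conn, "How many hires came from each source?")
```

The read-only check is a design choice worth keeping in any real version of this pattern: because the query text comes from a model, it should be treated like any other untrusted input before it reaches the data store.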

Navigating Large Language Models: Addressing Problems and Embracing Self-Hosting

Problems with Large Language Models

While ChatGPT, powered by models like GPT-4, offers impressive results, there are still some challenges and concerns associated with its use. Here are a few problems that arise with ChatGPT:

- Reliability: GPT-4 is still relatively nascent and may not be completely reliable for deployment in production infrastructure. As a result, it may not be advisable to rely solely on GPT-4 for critical systems that require consistent performance and reliability.

- Terms of Service and Data Privacy: As with any AI model, there are concerns about how data is handled and whether it is used for training purposes. Trusting that data will be handled securely and not leaked or misused can be a significant issue, especially when dealing with sensitive data like personally identifiable information (PII).

- Self-hosted Models: Using smaller, self-hosted models can provide advantages in terms of reliability, cost, and performance. By hosting the models within your own infrastructure, you have more control over input/output parameters, monitoring, and security configurations. This approach can mitigate risks associated with relying on external services.

- Talent and Infrastructure: Hosting even smaller language models requires specialized skills and infrastructure. It may be necessary to build the required expertise and resources in-house to manage and use these models effectively. While cloud vendors like Azure, Google, and Amazon are developing their own large language models, they may not have extensive experience in handling untrusted inputs and the specific challenges associated with them.

- Data Security: Protecting sensitive data is crucial, especially when processing PII. One approach is to train models without directly exposing the raw data. For example, using lossless hashes of values instead of the actual data can help maintain privacy while still capturing meaningful relationships. Experimenting with different approaches and ensuring data security will be essential.
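
One possible reading of the hashing idea in the Data Security bullet above is to replace each sensitive value with a deterministic keyed hash, so that equal values still map to equal tokens and relationships survive while the raw value does not. The sketch below uses HMAC-SHA256 from Python's standard library; the secret key, the field names, and the sample records are hypothetical, and this is not necessarily the approach discussed in the episode.

```python
import hashlib
import hmac

# Hypothetical secret; in practice it would come from a secrets manager.
SECRET_KEY = b"replace-with-a-real-secret"

def pseudonymize(value: str, key: bytes = SECRET_KEY) -> str:
    """Return a deterministic keyed hash of a normalized value."""
    normalized = value.strip().lower().encode("utf-8")
    return hmac.new(key, normalized, hashlib.sha256).hexdigest()

def scrub_record(record: dict, pii_fields: set) -> dict:
    """Hash only the fields marked as PII and leave the rest untouched."""
    return {
        field: pseudonymize(value) if field in pii_fields else value
        for field, value in record.items()
    }

# Made-up records: the same person appears twice with different formatting.
records = [
    {"email": "Ada@example.com", "role": "engineer"},
    {"email": "ada@example.com ", "role": "manager"},
]
scrubbed = [scrub_record(r, {"email"}) for r in records]
```

Because both email values normalize to the same string, they receive the same token, so a model trained on the scrubbed records can still learn that the two rows refer to the same person.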

Advantages of Self-Hosted Models

- Better model performance: Smaller models can provide improved performance in answering questions.

- Cost reduction: Computation costs are lower when using smaller models, without the additional overhead of a third party.

- Control and accountability: Self-hosting models allows for more control and accountability, as it runs within your own infrastructure.

- Data security and privacy: Self-hosting mitigates the risk of data escape and ensures better control over input and output parameters.

- Monitoring and security: Self-hosted models enable better monitoring and the ability to set up security configurations according to your needs.

- Preferred for enterprise SaaS applications: For features that can be served by self-hosted models and meet the required performance standards, it is preferable to choose self-hosting.

- Viability of GPT-4: The reliability, data security, and data privacy aspects of GPT-4 are still being assessed and need further evaluation before considering it for production systems.

Evaluation and Decision-making

When considering whether to invest in self-hosted models or rely on large commercial language models, leaders should carefully evaluate the following factors:

- Use Cases: Assess whether the problem at hand can be effectively addressed by smaller models in terms of cost efficiency and computational effectiveness.

- Long-Term Cost Implications: Consider the potential cost savings of hosting your own model compared to accessing very large models, which may provide diminishing returns.

- Control and Autonomy: Weigh the benefits of having greater control and autonomy over the infrastructure and direction of the model, as well as the ability to customize and specialize the model to specific use cases.

- Investment and Learning Opportunities: Acknowledge that building and training smaller models may require initial investment in terms of team resources, experimentation, and fine-tuning. However, this investment can lead to optimized models tailored to specific use cases and enhance the team's knowledge and understanding.

Understanding Prompt Engineering

Prompt engineering has become a topic of debate within the field of large language models (LLMs). It involves crafting effective prompts to elicit desired responses from the model. Here are some key points to understand the concept and its implications:

- Prompt Engineering as a Distinct Role: Prompt engineering may become a recognized job title or specialized role within the field, as experts optimize prompts and manipulate neural networks effectively.

- Engineering Approach to Prompts: Prompt engineering involves applying the scientific method to generate predictable outputs by tweaking and refining prompts to achieve desired results.

- Distinction from Casual Prompt Usage: Simply copying and pasting prompts without deeper understanding or modifications does not qualify as prompt engineering.

- Multifaceted Nature of Prompt Engineering: Prompt engineering requires a comprehensive understanding of how prompts influence neural networks and the specific information they capture, going beyond linguistic skills.

- Lack of Deterministic Programming: LLMs introduce complexity due to variations in models, training data, and changing behaviors, making prompt engineering challenging.

- Potential Efficiency and Predictability Improvements: Deepening understanding of LLMs may lead to more efficient activation of neural network parts, resulting in more predictable and consistent results.

- Visualizing the Layered Encoding: Transformer architectures in LLMs encode information at different layers, similar to how CNNs process images. Prompt engineers can explore activating specific layers to influence generated outputs.

- Tooling Landscape and LLMOps: Attention is shifting to the tooling landscape surrounding LLMs, referred to as LLMOps, which includes development, deployment, and management practices. The term is still evolving.
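
The "scientific method" framing above can be made concrete with a small experiment harness: hold the test cases fixed, vary only the prompt template, and measure how often the output matches what was expected. The sketch below is a minimal illustration; `fake_model` is a hypothetical stub standing in for a real model call, and the templates and test case are invented.

```python
from typing import Callable, Dict, List, Tuple

def fake_model(prompt: str) -> str:
    """Stub model (assumption): it answers concisely only when told to."""
    if "one word" in prompt.lower():
        return "Paris"
    return "The capital of France is Paris, a city on the Seine."

def pass_rate(
    template: str,
    cases: List[Tuple[str, str]],
    model: Callable[[str], str],
) -> float:
    """Fraction of test cases where the model output equals the expected answer."""
    passed = 0
    for question, expected in cases:
        output = model(template.format(question=question))
        if output.strip() == expected:
            passed += 1
    return passed / len(cases)

# Two prompt variants evaluated against the same fixed test set.
templates: Dict[str, str] = {
    "baseline": "Answer the question: {question}",
    "constrained": "Answer in one word only. Question: {question}",
}
cases = [("What is the capital of France?", "Paris")]

scores = {name: pass_rate(t, cases, fake_model) for name, t in templates.items()}
```

With a real model the outputs vary between runs, so each variant would typically be sampled several times per case before comparing pass rates.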

LLMOps and Critical Tooling for LLMs

LLMOps and the tooling landscape around large language models (LLMs) are gaining attention.

When it comes to prompt management, quick data handling, labeling feedback, and other essential tasks, certain tools are expected to play a critical role as LLM usage expands. Some key considerations include:

- Vector Databases: Searchable databases like Pinecone will be crucial for retrieving relevant context to feed back into the neural network. Accessing relevant information empowers prompt engineering and optimization.

- Project Frameworks: Projects like LangChain provide coding frameworks that make it easier to implement a wide range of functionalities, contributing to efficient LLM usage.

- Integration and Infrastructure: LLMs are typically part of broader programs, necessitating effective integration and management of various components. Wiring different parts together to achieve desired outcomes is vital and may require expertise in infrastructure and memory management.

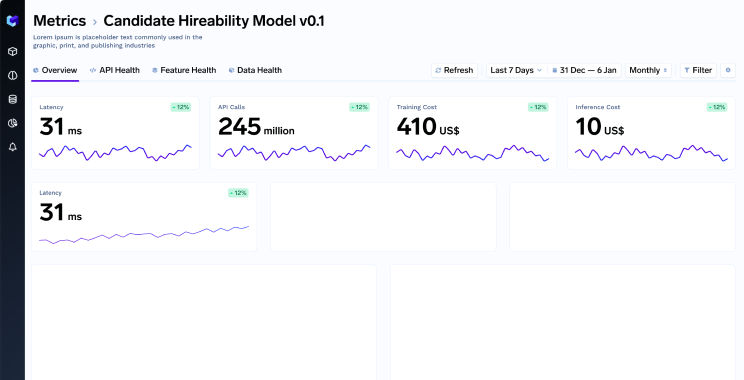

- Monitoring and Maintenance: Traditional machine learning practices, such as monitoring for regressions, performance evaluation, and infrastructure capacity assessment, remain relevant in the context of LLMs. Ensuring proper infrastructure and capacity support is crucial for optimal performance.

- Prompt Storage: Saving prompts for future use requires thoughtful consideration. Although various options, such as databases, caching, or file storage, can be used to store text and even parameterizable text, designing meaningful ways to store prompts is an ongoing area of exploration.

- Memory Optimization: Dealing with the memory requirements of large models can be challenging. Managing GPU RAM usage becomes crucial, especially when fine-tuning models that increase memory requirements significantly. Optimizing models for specific GPU types or latency requirements requires expertise and tooling support.

- Infra-Management Tooling: As organizations run LLMs on their own cloud infrastructures, new challenges arise in terms of managing the infrastructure. Tooling support is needed for tasks like GPU auto-scaling, ensuring uptime, optimizing costs, and building scalable systems that align with specific business requirements.

- Developer Workflows: Tooling that enhances developer workflows in working with LLMs is essential. Simplifying complex processes and providing intuitive interfaces can help accelerate adoption and make LLMs more accessible to a broader range of users.

- Educating the Community: With the LLM field still in an exploratory phase, companies like TrueFoundry have an opportunity to educate and guide the community on available tools, best practices, and solutions to common challenges.
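
To illustrate the searchable-database bullet above (retrieving relevant context to feed back into the model), here is a toy in-memory similarity search. It is a sketch only: the `embed` function is a word-count stand-in for a learned embedding model, `TinyVectorIndex` is a hypothetical class rather than any product's API, and the documents are invented.

```python
import math
from collections import Counter

def embed(text: str) -> Counter:
    """Toy embedding (assumption): word counts instead of a learned vector."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

class TinyVectorIndex:
    """Hypothetical stand-in for a vector database: add texts, search by similarity."""

    def __init__(self) -> None:
        self._items = []

    def add(self, text: str) -> None:
        self._items.append((text, embed(text)))

    def search(self, query: str, k: int = 1) -> list:
        q = embed(query)
        ranked = sorted(
            self._items, key=lambda item: cosine(q, item[1]), reverse=True
        )
        return [text for text, _ in ranked[:k]]

index = TinyVectorIndex()
index.add("interview scheduling steps for recruiters")
index.add("budget approval process for new headcount")
index.add("sourcing performance by referral channel")

question = "how is sourcing performance measured"
top = index.search(question, k=1)

# The retrieved text becomes context in the prompt sent to the model.
prompt = f"Context: {top[0]}\nQuestion: {question}"
```

A production system would swap the word counts for embeddings from a trained model and the list scan for an approximate nearest-neighbor index, but the retrieve-then-prompt shape stays the same.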

Evaluating Large Language Models

The "human in the loop" approach is commonly employed in serious use cases with LLMs. Human validation is crucial for assessing the model's performance and validating its output. Even the fine-tuning process of GPT models relied on human involvement.

For less critical use cases where there is room for some margin of error, a cost-effective approach involves using larger models to evaluate the responses of smaller models. Multiple responses generated by the smaller models can be compared and rated by a larger model, allowing for the establishment of metrics to measure performance. While this approach incurs some costs, it is generally more economical compared to relying solely on human efforts.
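
The larger-model-as-evaluator approach described above might look something like the sketch below. Both models are hypothetical stubs: `small_model` returns made-up candidate answers, and `judge_model` uses a placeholder heuristic where a real system would prompt a larger model for a rating.

```python
from statistics import mean

def small_model(question: str) -> list:
    """Stub for the smaller model (assumption): returns several candidate answers.
    The figures in the answers are invented for illustration."""
    return [
        "Referrals produced the most hires last quarter.",
        "I am not sure.",
        "Hires: referrals 12, job boards 7, agencies 3.",
    ]

def judge_model(question: str, answer: str) -> int:
    """Stub for the larger judge model (assumption): returns a rating from 1 to 5.
    A real judge would be prompted with the question, the answer, and a rubric."""
    if "not sure" in answer.lower():
        return 1
    return 5 if any(ch.isdigit() for ch in answer) else 3

def evaluate(question: str) -> dict:
    """Rate every candidate and keep simple metrics for tracking over time."""
    candidates = small_model(question)
    ratings = [judge_model(question, answer) for answer in candidates]
    best = candidates[ratings.index(max(ratings))]
    return {"ratings": ratings, "mean_rating": mean(ratings), "best": best}

report = evaluate("Which source produced the most hires?")
```

Tracking the mean rating across a fixed question set gives a cheap regression signal, while a sample of the judged answers can still be routed to humans for spot checks.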

Staying Updated in the Ever-Evolving World

Staying updated in the ever-evolving world of LLMs and machine learning can be challenging. Here are some effective approaches to staying informed and gaining knowledge:

- AI Explained Videos: Watching AI explained videos on platforms like YouTube provides a convenient way to grasp the key findings and results of academic papers without extensive reading. These videos summarize complex concepts, saving time and effort.

- Online Communities: Engaging with online communities, such as Hacker News and machine learning subreddits, offers insights, discussions, and updates on emerging trends and technologies in the field.

- Hands-on Experience: Actively participating in practical applications of LLMs is crucial for gaining a deeper understanding of their potential and limitations. By experimenting and exploring the capabilities, one can enhance their knowledge.

- Accessibility of APIs: Unlike in the past, where machine learning required revisiting complex math concepts, today's landscape is more API-driven. Pre-built APIs and libraries enable developers to start experimenting and building applications without the need to relearn advanced math.

- Programming Skills: Learning specific library methods and resolving environment issues are valuable skills in implementing LLMs effectively.

Keep watching the TrueML YouTube series and reading the TrueML blog series.

TrueFoundry is an ML deployment PaaS built on Kubernetes that speeds up developer workflows, giving developers full flexibility in testing and deploying models while ensuring full security and control for the infra team. Our platform enables machine learning teams to deploy and monitor models in 15 minutes with 100% reliability, scalability, and the ability to roll back in seconds, allowing them to save costs, release models to production faster, and realize real business value.