Welcome to this series on building and setting up scalable machine learning infrastructure in a Kubernetes environment. In this series, we will cover various topics related to the development, deployment, and management of machine learning models on a Kubernetes cluster.

Scalable Machine Learning Infrastructure: Best Practices

Machine Learning Operations, commonly known as MLOps, refers to the practices and techniques used to manage the lifecycle of machine learning models. A scalable MLOps infrastructure enables organizations to build, deploy, and manage models at scale, increasing the return on investment (ROI) of their data science efforts.

The benefits of a scalable MLOps infrastructure include:

- Faster time-to-market for machine learning models

- Improved scalability and flexibility for machine learning workflows

- Consistent and repeatable model deployments

- Improved collaboration between data scientists and IT operations teams

- Improved model accuracy and reliability

Airbnb invested heavily in setting up a scalable MLOps practice right from the beginning. It used machine learning models to improve its search ranking algorithms with search driven by user data, which resulted in a better search experience and an estimated 10% increase in bookings. Airbnb also used machine learning models to provide personalised recommendations to its users, which helped improve user experience and engagement.

Challenges in MLOps Infrastructure Setup

Organizations that rely on Virtual Machines (VMs), whether on AWS, Google Cloud Platform (GCP), or Microsoft Azure, for setting up their ML training and deployment infrastructure may face several challenges:

- Scalability: VMs have limited scalability options, and organisations may need to manually configure additional instances to handle the load, resulting in performance issues and increased costs.

- Resource Management: VMs require manual configuration for resource allocation, and organizations may need to estimate the resources required for their ML workloads. This can lead to underutilization of resources or resource constraints, impacting the performance of the ML models.

- Version Control: Managing different versions of ML models can be challenging when using VMs. Organizations may need to manually manage different versions of the model, which can be time-consuming and error-prone.

- Security: VMs may have security vulnerabilities, and organizations may need to manually configure security features such as firewalls and intrusion detection systems to protect their ML models and data.

- Monitoring and Logging: Monitoring the performance of ML models and the underlying infrastructure can be challenging in a VM-based setup, where it becomes difficult to track the status of individual components, identify bottlenecks, and troubleshoot issues.

Kubernetes in MLOps

Kubernetes is a popular open-source container orchestration platform that automates the deployment, scaling, and management of containerized applications. It provides a unified API and declarative configuration that simplify the management of containerized workloads, enabling organizations to build scalable, resilient, and portable infrastructure for training and deploying ML models. Kubernetes offers several benefits over raw VMs, including better resource utilization, simplified version control, and efficient scaling. Moreover, Kubernetes provides built-in security features and supports centralized monitoring and logging, which can help organizations keep their ML infrastructure secure and reliable. This makes Kubernetes a strong choice for organizations looking to build scalable machine learning pipelines for the long term.
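To make "declarative configuration" concrete, here is a minimal sketch of a Deployment manifest built as a plain Python dictionary and serialized to JSON, a format that `kubectl apply -f` accepts alongside YAML. The name `churn-model`, the image URL, the port, and the replica count are hypothetical placeholders chosen for illustration, not values from any real setup.

```python
import json


def build_model_deployment(name: str, image: str, replicas: int = 3) -> dict:
    """Return a Deployment manifest describing the desired state of a model server."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            # Desired number of identical serving pods.
            "replicas": replicas,
            # The selector must match the labels on the pod template below.
            "selector": {"matchLabels": labels},
            # Replace pods gradually so the service stays available during updates.
            "strategy": {
                "type": "RollingUpdate",
                "rollingUpdate": {"maxSurge": 1, "maxUnavailable": 0},
            },
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,  # hypothetical image reference
                            "ports": [{"containerPort": 8080}],  # assumed port
                        }
                    ]
                },
            },
        },
    }


if __name__ == "__main__":
    manifest = build_model_deployment(
        "churn-model", "registry.example.com/churn-model:1.0.0"
    )
    print(json.dumps(manifest, indent=2))
```

The point of the sketch is that the manifest only states what should exist (three replicas of one image, updated gradually); Kubernetes is responsible for converging the cluster to that state.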

Managed Kubernetes on AWS, GCP, and Azure

Cloud providers (AWS, GCP, and Azure) offer managed Kubernetes services (EKS, GKE, and AKS respectively) that enable organizations to easily set up, configure, and manage Kubernetes clusters, removing much of the operational overhead associated with running and scaling Kubernetes. Additionally, cloud providers offer integrations with other cloud services such as storage, databases, and networking, which can further simplify the deployment and management of ML workloads on Kubernetes. By adopting Kubernetes, either directly or through a managed service, organizations can build a flexible and scalable MLOps pipeline that can handle their growing ML workloads and enable faster time-to-market for their ML models.

Benefits of Kubernetes for MLOps

Let's dive into the benefits of using Kubernetes for ML training and deployment pipelines in more detail:

- Resource Management: Kubernetes enables organizations to easily provision and manage the resources required for running ML training jobs and deploying models. It can automatically scale resources up and down based on the workload, which helps ensure efficient resource utilization and reduces costs.

- Simplified Deployment with SRE Best Practices: Kubernetes provides a unified API and declarative configuration that simplify the deployment of ML models. Organizations can deploy models in a scalable and resilient manner, with built-in rolling updates and support for patterns such as canary deployments, which can help minimize downtime and improve reliability.

- Flexibility and Portability: Kubernetes provides a flexible and portable infrastructure that can support various deployment scenarios, including on-premises, cloud, and hybrid environments. This enables organizations to easily move their ML workloads across different environments and avoid vendor lock-in.

- Better Cost and Resource Utilization: Kubernetes enables organizations to utilize resources efficiently by packing multiple ML workloads onto a single node, which helps minimize infrastructure costs. Moreover, Kubernetes can leverage specialized hardware such as GPUs to accelerate ML training and inference, which can further improve performance.
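The automatic scaling mentioned in the list above is typically handled by the Horizontal Pod Autoscaler, whose documented core rule is desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue). The sketch below reproduces only that rule plus clamping to a replica range. It deliberately ignores the tolerance band and stabilization behaviour of the real controller, and the function name, default bounds, and example numbers are illustrative assumptions.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
) -> int:
    """Approximate the Horizontal Pod Autoscaler's core scaling rule.

    Simplification: no tolerance band, no stabilization window.
    """
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    # Scale the replica count by how far the observed metric is from the target.
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Keep the result inside the configured replica range.
    return max(min_replicas, min(max_replicas, raw))


if __name__ == "__main__":
    # Hypothetical case: 4 serving pods at 90% average CPU, targeting 60%.
    print(desired_replicas(4, current_metric=90, target_metric=60))
```

Because the rule is proportional, a model server running well above its target utilization gains replicas quickly, while one running well below it shrinks toward `min_replicas`.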

Example Use Case 1: Airbnb

Airbnb is an online marketplace that allows people to rent out their homes or apartments to travelers. With millions of users and a vast amount of data to analyze, Airbnb needed a robust and scalable machine learning infrastructure to analyze user behavior, improve search rankings, and provide personalized recommendations to users.

To achieve this, Airbnb invested in building an MLOps infrastructure on Kubernetes, which enabled its data science team to develop and deploy machine learning models at scale. With Kubernetes, Airbnb was able to containerize its models and deploy them as microservices, which made it easier to manage and scale the infrastructure as its needs grew. As a result, Airbnb was able to improve its search rankings and provide more relevant recommendations to its users, which led to increased bookings and higher revenues. In addition, the company was able to improve the efficiency of its data science workflows, allowing the team to focus on developing more advanced machine learning models.

Example Use Case 2: Lyft

Lyft, a large provider of Transportation as a Service (TaaS), initially built its ML infrastructure on top of AWS using a combination of EC2 instances and Docker containers. They used EC2 instances to provision virtual machines with varying levels of CPU, memory, and GPU resources, depending on the specific ML workload requirements. They also used Docker containers to package and deploy their ML workloads and ensure consistency across different environments.

However, as Lyft's ML workloads grew in complexity and scale, they faced several challenges, including maintaining consistency across different environments and teams. They decided to migrate their ML infrastructure to Kubernetes, initially using Kubeflow and later an in-house platform. By migrating to a Kubernetes-based infrastructure, Lyft was able to build a more efficient and scalable ML infrastructure, which helped them accelerate their ML development and deployment pipelines. Additionally, they were able to take advantage of the benefits of Kubernetes, such as auto-scaling and efficient resource utilization, to optimize their ML workloads and reduce infrastructure costs. They used EKS from AWS as their managed Kubernetes service.

Overall, investing in MLOps infrastructure on Kubernetes allowed Airbnb and Lyft to achieve significant productivity gains and improve their bottom line, demonstrating the value that scalable MLOps on top of Kubernetes can bring to organizations looking to leverage machine learning at scale.

Challenges with Kubernetes in MLOps

Despite the benefits, using Kubernetes for ML Infrastructure comes with its own set of challenges and complexities:

- Resource Management when dealing with large datasets or complex models: These workloads require large amounts of computing resources, including GPUs, memory, and storage, so it can be challenging to allocate them efficiently without conflicting with other workloads running on the Kubernetes cluster.

- Integration of Different Tools: Organizations may use different ML tools such as TensorFlow, PyTorch, and scikit-learn, each with its own requirements and dependencies. Integrating these tools and dependencies with the Kubernetes infrastructure can be complex and time-consuming.

- Security Concerns for Machine Learning Models: Ensuring that ML models are protected from unauthorized access or attacks is critical. Kubernetes provides various security features, such as Role-Based Access Control (RBAC), network policies, and container isolation, but configuring them correctly can be challenging, especially when dealing with sensitive data like personal information or financial records.

- Monitoring and Logging in a Distributed Environment: Monitoring the performance of ML models and the underlying infrastructure is critical for ensuring optimal performance. However, in a distributed Kubernetes environment, it can be challenging to track the status of individual components, identify bottlenecks, and troubleshoot issues. Organizations need to set up monitoring and logging tools that provide real-time visibility into their machine learning workflows and Kubernetes infrastructure.
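For the resource-management challenge in particular, a common starting point is to declare explicit requests and limits on every training workload, so the scheduler can place it without starving its neighbours. The sketch below builds a Job manifest as a Python dictionary. It assumes the NVIDIA device plugin is installed so that the `nvidia.com/gpu` resource is available on the cluster, and the job name, image, and resource sizes are hypothetical.

```python
import json


def build_training_job(
    name: str, image: str, gpus: int = 1, cpu: str = "4", memory: str = "16Gi"
) -> dict:
    """Return a Job manifest for a one-off training run with explicit resources."""
    resources = {
        # What the scheduler reserves on a node for this container.
        "requests": {"cpu": cpu, "memory": memory},
        # Hard caps; GPUs are whole units specified under limits.
        "limits": {"memory": memory, "nvidia.com/gpu": gpus},
    }
    return {
        "apiVersion": "batch/v1",
        "kind": "Job",
        "metadata": {"name": name},
        "spec": {
            "backoffLimit": 2,  # assumed retry budget for failed pods
            "template": {
                "spec": {
                    "restartPolicy": "Never",
                    "containers": [
                        {
                            "name": "trainer",
                            "image": image,  # hypothetical image reference
                            "resources": resources,
                        }
                    ],
                }
            },
        },
    }


if __name__ == "__main__":
    job = build_training_job(
        "train-ranker", "registry.example.com/train-ranker:0.1.0", gpus=2
    )
    print(json.dumps(job, indent=2))
```

Stating requests up front does not remove contention, but it makes it visible: a job that cannot be placed stays pending instead of silently degrading other workloads on the same node.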

By following best practices and leveraging the capabilities of Kubernetes, organizations can overcome these challenges and build scalable, secure, and reliable MLOps infrastructure.

Conclusion

Kubernetes for Machine Learning offers numerous advantages for organizations seeking to optimize their machine learning workflows. While there are challenges to setting up and managing MLOps infrastructure on Kubernetes, such as resource management, security, and monitoring, a thorough understanding of Kubernetes and best practices can help overcome these obstacles.

In this series on ML on Kubernetes, we will cover various topics related to building and setting up ML infrastructure in a Kubernetes environment, including the following:

- Containerisation and orchestration using Kubernetes

- Distributed Training on Kubernetes

- Kubernetes based model serving and deployment

- GPU based ML Infrastructure on Kubernetes

- Kubernetes CI/CD Pipeline

- Kubernetes Monitoring for MLOps

and more.

By adopting these best practices and harnessing the power of Kubernetes, organisations can scale and deploy machine learning models with consistency, reliability, and security. This, in turn, would lead to faster time-to-market, improved collaboration between data science and IT operations teams, and better ROI on their data science investments.

Check out how Gong has built a scalable machine learning research infrastructure on Kubernetes:

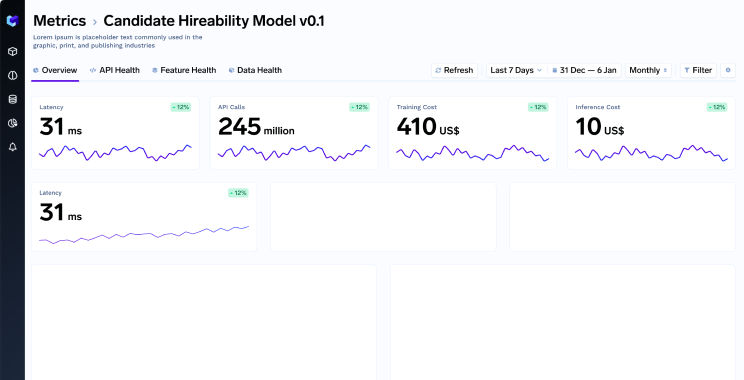

TrueFoundry is an ML deployment PaaS over Kubernetes that speeds up developer workflows, giving developers full flexibility in testing and deploying models while ensuring full security and control for the infra team. Through our platform, we enable machine learning teams to deploy and monitor models in 15 minutes with 100% reliability, scalability, and the ability to roll back in seconds, allowing them to save cost, release models to production faster, and realise real business value.