We are back with another episode of True ML Talks. In this episode, we again dive deep into MLOps pipelines and LLM applications in enterprises with our guest, Labhesh Patel.

Labhesh was CTO and Chief Scientist at Jumio Corporation, where he applied ML and AI to identity verification. He has previously held multiple leadership positions in both engineering and science roles at leading organizations.

Our conversation with Labhesh covers the following topics:

- Interesting Research Papers and Patents

- Utilizing AI to solve business problems

- Building the MLOps Pipeline

- Breaking Down Silos: Building Cohesive MLOps Teams for Success

- Navigating Cloud Provider Roadblocks

- Future of Generative AI

Watch the full episode below:

Interesting Research Papers and Patents

Research Papers

- Attention Is All You Need: This paper introduced the transformer architecture, which revolutionized natural language processing and laid the foundation for many LLMs, including the models behind ChatGPT.

- Segmentation Guided Attention Networks for Visual Question Answering: This paper proposed a novel method for answering questions about images by utilizing segmentation maps and attention mechanisms. Although it has been superseded by newer techniques, it highlights the importance of focusing on specific areas of an image for accurate answers.

- CycleGen: This paper explores the idea of generating text summaries based on user reviews and product characteristics. It predates ChatGPT and demonstrates the potential for LLMs to assist with writing tasks.

Patents

- Voice over IP Buffering and Negotiation Protocol: This patent arose from a simple bug fix that improved voice quality in VoIP calls. It highlights the potential for innovation in seemingly mundane solutions and the importance of considering defensive patenting strategies.

Utilizing AI to solve business problems

Transforming manual processes with AI brings both challenges and opportunities. Here are some key takeaways:

Start with the Business, Not the Buzz

- Identify the core business problem: Why automate? What are the quantifiable benefits (scalability, cost reduction, speed)?

- Manage expectations: AI isn't magic. Communicate what's achievable and set realistic performance metrics.

- Understand data's role: 90% of the work lies in data management, collection, and quality assurance. Clean data is vital for accurate models.

Building the Right Path

- One step at a time: Focus on a single, high-impact use case to prove the concept and build your pipeline.

- Compliance first: Ensure proper data consent and usage before even touching a single byte.

- Metrics matter: Track relevant metrics (precision, recall, error rates) to evaluate success and guide further decisions.

- Teamwork is key: Assemble a team with expertise in ML engineering, data management, and product development.

Beyond the First Step

- Iterate and evolve: Continuously evaluate, improve, and expand your AI solutions based on data and feedback.

- Embrace the learning curve: Be prepared to invest in talent and education to build a culture of AI understanding within your organization.

Important things to keep in mind

- Beware of the 99% trap: High accuracy on isolated cases can mask larger problems. Pay attention to overall performance and error rates.

- Think statistically: Metrics like precision and recall provide a more nuanced picture of AI performance than simple accuracy percentages.
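
The "99% trap" can be illustrated with a small worked example. The sketch below uses made-up numbers (1,000 transactions, 10 of them fraudulent) and two hypothetical models; none of this data comes from the episode. It only shows how accuracy can look excellent while recall reveals that the model is useless for the business problem.

```python
def confusion_counts(y_true, y_pred):
    """Count (tp, fp, fn, tn) for binary labels, where 1 is the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return tp, fp, fn, tn


def summarize(y_true, y_pred):
    """Return accuracy, precision, and recall as a dict."""
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    return {
        "accuracy": (tp + tn) / len(y_true),
        # Precision and recall are defined as 0.0 when their denominator is empty.
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }


# Hypothetical data: 1,000 transactions, 10 of them fraudulent (label 1).
y_true = [1] * 10 + [0] * 990

# Model A never flags anything.
never_flags = [0] * 1000
# Model B catches 8 of the 10 frauds and raises 20 false alarms.
flags_some = [1] * 8 + [0] * 2 + [1] * 20 + [0] * 970

report_a = summarize(y_true, never_flags)
report_b = summarize(y_true, flags_some)
print(report_a)
print(report_b)
```

Under these assumptions, Model A scores 99% accuracy while catching no fraud at all (recall of 0), whereas Model B scores a slightly lower 97.8% accuracy but catches 80% of the fraud.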

By prioritizing business needs, focusing on data quality, and building a strong team, you can navigate the complexities and unlock the true potential of AI to transform your operations.

Building the MLOps Pipeline

If you are building complex ML systems, keep the following in mind.

Embrace Cloud-First, But Remain Agile

- Leverage your cloud provider's built-in MLOps tools, such as Amazon SageMaker on AWS, for fast initial setup.

- Avoid vendor management and compliance hurdles by staying within the cloud ecosystem.

- Move beyond native offerings when limitations arise, seeking out specialized solutions like open-source platforms or vendors.

Importance of Data Quality

- Recognize that cloud providers often neglect data quality, requiring additional internal systems or third-party services.

- Prioritize automated data cleaning and validation to ensure model accuracy and performance.
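
As a rough illustration of automated validation, the sketch below checks incoming rows before they reach training. The schema (`user_id`, `document_type`, `age`), the allowed document types, and the age bounds are all hypothetical and chosen only for the example; a real pipeline would likely use a dedicated validation library and rules specific to its data.

```python
from dataclasses import dataclass, field

# Hypothetical schema for an identity-verification dataset.
REQUIRED_FIELDS = ("user_id", "document_type", "age")
ALLOWED_DOCUMENT_TYPES = {"passport", "driver_license", "id_card"}


@dataclass
class ValidationReport:
    valid_rows: list = field(default_factory=list)
    errors: list = field(default_factory=list)  # (row_index, reason) tuples


def validate_rows(rows):
    """Split rows into valid rows and (index, reason) errors."""
    report = ValidationReport()
    seen_ids = set()
    for index, row in enumerate(rows):
        missing = [name for name in REQUIRED_FIELDS if row.get(name) in (None, "")]
        if missing:
            report.errors.append((index, f"missing fields: {', '.join(missing)}"))
            continue
        if row["user_id"] in seen_ids:
            report.errors.append((index, "duplicate user_id"))
            continue
        if row["document_type"] not in ALLOWED_DOCUMENT_TYPES:
            report.errors.append((index, f"unknown document_type: {row['document_type']}"))
            continue
        if not isinstance(row["age"], int) or not 0 < row["age"] < 130:
            report.errors.append((index, "age out of range"))
            continue
        seen_ids.add(row["user_id"])
        report.valid_rows.append(row)
    return report


sample_rows = [
    {"user_id": "u1", "document_type": "passport", "age": 34},
    {"user_id": "u1", "document_type": "passport", "age": 34},  # duplicate
    {"user_id": "u2", "document_type": "selfie", "age": 28},    # bad category
    {"user_id": "u3", "document_type": "id_card", "age": None}, # missing value
    {"user_id": "u4", "document_type": "id_card", "age": 250},  # implausible
]
sample_report = validate_rows(sample_rows)
print(len(sample_report.valid_rows), "valid;", len(sample_report.errors), "rejected")
```

Recording a reason for every rejected row, rather than silently dropping it, makes it easier to trace data quality problems back to their source.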

Architectural considerations

- Model building vs. production: Consider separate teams for model development and deployment, with distinct skill sets and ownership.

- Structure for scalability and agility: Design a flexible architecture that can accommodate new tools and integrations as the pipeline evolves.

Breaking Down Silos: Building Cohesive MLOps Teams for Success

In the fast-paced world of MLOps, collaboration is king. But too often, teams become fragmented, with data scientists building models in isolation and engineers struggling to deploy and maintain them. The result? Slow progress, missed opportunities, and frustrated stakeholders.

So how do we break down these silos and build MLOps teams that thrive?

Bringing everyone together

Imagine a cross-functional team of 8-10 individuals, each with unique expertise: product managers, data engineers, DevOps, security, ML engineers, QA, and even customer support. This diverse group, united by a common goal (e.g., reducing fraud), becomes a powerful force for innovation and efficiency.

Here's why this approach works:

- Shared ownership: When everyone feels responsible for the entire lifecycle of a model, there's no "over the fence" mentality. Issues get tackled collaboratively, and solutions are optimized for real-world deployment and maintenance.

- Informed decisions: Data engineers understand ML needs, and ML engineers appreciate deployment realities. This cross-pollination of knowledge leads to better model selection and feature engineering, avoiding the pitfalls of "research-perfect" models that are impossible to deploy.

- Faster iterations: Close collaboration fosters communication and agility. The team can quickly experiment, refine, and iterate on models, maximizing the impact of their efforts.

Tackling skill gaps for building such a team

Targeted hiring is essential. You need data engineers with a strong understanding of ML pipelines and ML engineers who appreciate software engineering principles. This combination of diverse skills is the secret sauce of a high-performing MLOps team.

Breaking down silos isn't just about structure; it's about culture. Encourage open communication, celebrate diverse perspectives, and create an environment where everyone feels empowered to contribute. By doing so, you'll build a cohesive MLOps team that can turn your ML dreams into reality.

Navigating Cloud Provider Roadblocks

Relying heavily on a single cloud provider can lead to roadblocks, so it is important to be able to pivot when one arises.

- Don’t be afraid to explore alternatives: When cloud providers hit limitations, look for specialized vendors or open-source solutions to fill the gaps.

- Proactive communication matters: Don't hesitate to voice your concerns directly to cloud providers. Feedback can lead to improved collaboration and access to exclusive solutions.

- Adaptability is key: Be prepared to adjust your approach based on emerging technologies and changing provider offerings.

Here are some common challenges that can arise:

Challenge 1: Super-regulated data access

When dealing with sensitive data (PII, healthcare records), strict regulations like GDPR and CCPA come into play. Cloud providers, while compliant with general standards, might not offer specific tools for secure access and audit trails.

Potential solutions include:

- Alternative vendors: Look for companies specializing in highly regulated environments and offering granular access control and auditability features.

- Open-source solutions: Consider open-source tools and customize them to address specific compliance needs.
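
To make "granular access control and auditability" concrete, here is a minimal sketch of field-level access with an audit trail. The roles, field names, and the `AuditedStore` class are hypothetical and invented for this example; they do not represent any specific vendor's or cloud provider's API, and a production system would also need authentication, tamper-resistant log storage, and retention policies.

```python
from datetime import datetime, timezone

# Hypothetical mapping from role to the record fields that role may read.
ROLE_FIELDS = {
    "analyst": {"user_id", "country"},
    "compliance_officer": {"user_id", "country", "date_of_birth"},
}


class AuditedStore:
    """In-memory record store that logs every read attempt."""

    def __init__(self, records):
        self._records = records
        self.audit_log = []

    def read(self, actor, role, record_id):
        allowed = ROLE_FIELDS.get(role, set())
        # Log the attempt before doing anything else, so denials are recorded too.
        self.audit_log.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "role": role,
            "record_id": record_id,
            "granted": bool(allowed),
            "fields": sorted(allowed),
        })
        if not allowed:
            raise PermissionError(f"role {role!r} may not read records")
        record = self._records[record_id]
        # Return only the fields this role is allowed to see.
        return {name: value for name, value in record.items() if name in allowed}


store = AuditedStore({
    "r1": {"user_id": "u1", "country": "DE", "date_of_birth": "1990-01-01"},
})
analyst_view = store.read("alice", "analyst", "r1")
print(analyst_view)
```

Logging before the permission check means that refused requests leave a trace as well, which is usually what an auditor wants to see.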

Challenge 2: Proprietary features & limited access

Sometimes, cloud providers hold back specific features or release them on their schedule, leaving clients waiting for crucial functionalities.

The potential solution is to communicate proactively with your point of contact (POC) at the cloud provider.

Giving direct feedback to the POC and communicating the blockers you face can sometimes land you and your team early access to private beta programs, ensuring that you don't miss out on future solutions.

Remember, even with roadblocks, a proactive and adaptable mindset can turn challenges into opportunities in the ever-evolving world of cloud-based MLOps.

Future of Generative AI

Generative AI, particularly large language models (LLMs), is all the rage. Right now, however, LLMs are in a "hype phase", praised for a seemingly magical ability to handle diverse tasks. Developers often resort to throwing API calls at LLMs, which leads to issues such as rate limiting and high costs.

Challenges for Enterprise Adoption

- Cost and scalability: Large models are expensive and computationally demanding, which can make them impractical for widespread enterprise use.

- Model safety and bias: Enterprise environments require model safety and control over potential biases, which can be difficult with LLMs.

- Inference time: LLMs struggle with latency, causing delays that hinder productivity and user experience.

The Future: Small Language Models to the Rescue?

There might be a shift towards small language models (SLMs) trained for specific tasks and domains within enterprises.

In such a router-based ("routered") architecture, each query would be directed to the appropriate SLM for faster and more efficient responses.

Smaller models also address cost and scalability concerns, making them more accessible to businesses.
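
The routing idea can be sketched in a few lines. In the example below, the model functions are placeholder stubs rather than real models, and the keyword-overlap rule is an assumption made purely for illustration; a real router might use a lightweight classifier or embeddings instead. Queries that match no specialist fall back to a general-purpose model.

```python
import re


# Placeholder stand-ins for task-specific SLMs and a general fallback model.
def fraud_slm(query):
    return f"[fraud-slm] {query}"


def support_slm(query):
    return f"[support-slm] {query}"


def general_llm(query):
    return f"[general-llm] {query}"


MODELS = {"fraud": fraud_slm, "support": support_slm, "general": general_llm}

# Hypothetical keywords that signal each specialist domain.
ROUTE_KEYWORDS = {
    "fraud": {"fraud", "chargeback", "suspicious"},
    "support": {"refund", "password", "login"},
}


def pick_route(query):
    """Choose the route whose keywords overlap most with the query."""
    words = set(re.findall(r"[a-z]+", query.lower()))
    best_route, best_hits = "general", 0
    for route, keywords in ROUTE_KEYWORDS.items():
        hits = len(words & keywords)
        if hits > best_hits:
            best_route, best_hits = route, hits
    return best_route


def answer(query):
    """Send the query to the selected model and return its response."""
    return MODELS[pick_route(query)](query)


print(answer("Is this chargeback suspicious?"))
print(answer("I forgot my password"))
print(answer("Summarize this contract"))
```

Keeping the routing decision separate from the models makes it possible to add or swap a specialist model without touching the others.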

Transition Triggers and Considerations

The transition will likely happen gradually, driven by the practical limitations of LLMs and the increasing availability of effective SLMs.

Cost reduction and improved latency will play key roles in accelerating the adoption of SLMs.

Keep watching the TrueML YouTube series and reading the TrueML blog series.

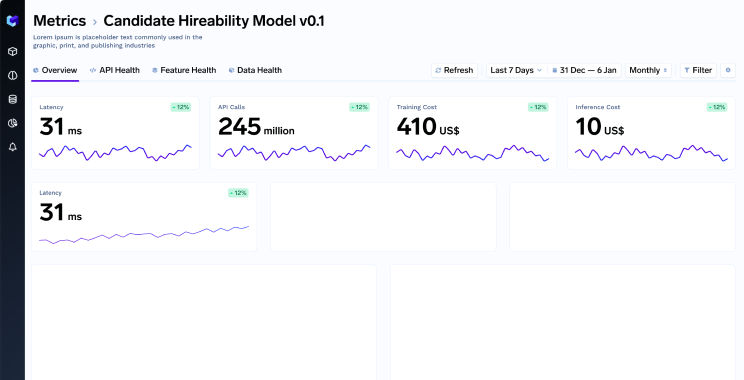

TrueFoundry is an ML deployment PaaS built on Kubernetes that speeds up developer workflows, giving developers full flexibility in testing and deploying models while ensuring full security and control for the infra team. Through our platform, we enable machine learning teams to deploy and monitor models in 15 minutes with 100% reliability, scalability, and the ability to roll back in seconds, allowing them to save costs, release models to production faster, and realise real business value.